What the Operator Knows That the Tooling Evangelist Doesn't

Lessons from running multi-account, multi-cloud Kubernetes deployments under compliance constraints — and why the showcase scenario isn't where the real problems live.

A few years into running a deployment platform that served hundreds of higher-education institutions across dozens of cloud accounts, the team hit a wall that no one had warned them about. Every environment — dev, staging, production, the regulated one for financial aid data, the one-off client instance behind a firewall — had its own deployment pipeline, its own credential store, its own failure modes. Every time someone added an environment, they weren't deploying software. They were deploying deployment infrastructure. The system that was supposed to reduce complexity was the fastest-growing source of it.

That experience — scaled across years, clients, and compliance regimes — is the backdrop for everything in this article. The patterns here are not theoretical. They are the conclusions that teams arrive at after the elegant demo architecture meets the full estate.

Reconciliation Is Not Orchestration

The GitOps pitch is clean: put your desired state in Git, let a controller reconcile the cluster to match. ArgoCD does this well. It answers one question continuously — "Does the live state match what Git says?" — and it answers it forever. Drift detection, self-healing, continuous reconciliation. For a single cluster with a stable config, it is genuinely elegant.

But reconciliation is not orchestration, and the difference only shows up when things get real.

A pipeline is a directed graph with state. It knows where it came from, where it's going, what happened at each step, and whether to continue or halt. It can stop. A reconciliation loop cannot stop. It can be set to manual sync, but that is a configuration state, not a halt condition. When the sync policy is itself managed in Git, a later commit that re-enables automated sync bypasses it entirely.

Picture a deployment with 27 infrastructure modules in strict dependency order — networking first, then SQL instances, then Kubernetes clusters, then namespaces, then services, then application deployments, then CDN, then monitoring. Each module depends on outputs from the previous layer. Each layer's failure means everything downstream must halt, not reconcile. A reconciliation loop that re-applies the CDN module while the namespace module is failing doesn't heal anything — it generates noise that obscures the actual failure.

The systems that survive at scale independently converge on ordered deployment graphs. Module weights enforce sequencing. Placeholder resolution flows outputs from one layer into inputs for the next. Explicit halt conditions stop the line when a layer fails. That is a pipeline. It was always a pipeline.

```yaml
# deployment-pipeline.yaml: ordered module graph with halt conditions
pipeline:
  name: site-deploy
  halt_on_failure: true
  modules:
    - name: networking
      weight: 10
      type: aws-vpc
      outputs: [vpc_id, subnet_ids]

    - name: sql
      weight: 20
      type: aws-rds
      inputs: { vpc_id: "#{networking.vpc_id}" }
      outputs: [db_endpoint]

    - name: kubernetes
      weight: 30
      type: aws-eks
      inputs: { subnet_ids: "#{networking.subnet_ids}" }
      outputs: [cluster_endpoint, cluster_ca]

    - name: namespaces
      weight: 40
      type: k8s-namespace
      inputs: { cluster: "#{kubernetes.cluster_endpoint}" }

    - name: application
      weight: 50
      type: k8s-deployment
      inputs:
        cluster: "#{kubernetes.cluster_endpoint}"
        db_endpoint: "#{sql.db_endpoint}"
      outputs: [service_url]        # consumed by cdn and monitoring

    - name: cdn
      weight: 120
      type: aws-cloudfront-distribution
      inputs: { origin: "#{application.service_url}" }
      outputs: [distribution_url]   # consumed by monitoring

    - name: monitoring
      weight: 140
      type: gcp-monitoring
      inputs: { endpoints: ["#{application.service_url}", "#{cdn.distribution_url}"] }
```

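Each module declares its weight and its inputs. The engine resolves #{...} placeholders from upstream outputs. If sql fails, halt_on_failure stops everything at weight 30 and above. Some of those modules (kubernetes, namespaces) never needed sql; they halt because the line stopped. The rest (application, and the cdn and monitoring modules that consume its outputs) could not run anyway: there is nothing to reconcile downstream because their inputs don't exist yet.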
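The weight ordering, placeholder resolution, and halt behavior described above fit in a few dozen lines. The following is a minimal Python sketch, not the engine behind the YAML: `run_pipeline`, `resolve`, and the `apply` callback are hypothetical names, modules are plain dicts shaped like the YAML entries, and `apply` stands in for whatever actually provisions a module and returns its outputs.

```python
import re
from typing import Any, Callable

# #{module.output}: a reference to an output produced by an upstream module
PLACEHOLDER = re.compile(r"#\{([\w-]+)\.(\w+)\}")


class HaltError(Exception):
    """An input could not be resolved because its upstream output does not exist."""


def _lookup(match: re.Match, outputs: dict) -> Any:
    module, key = match.group(1), match.group(2)
    try:
        return outputs[module][key]
    except KeyError:
        raise HaltError(f"unresolved input #{{{module}.{key}}}") from None


def resolve(value: Any, outputs: dict) -> Any:
    """Recursively replace #{module.output} placeholders with upstream outputs."""
    if isinstance(value, str):
        whole = PLACEHOLDER.fullmatch(value)
        if whole:  # a bare placeholder keeps the output's type (e.g. a list of subnets)
            return _lookup(whole, outputs)
        return PLACEHOLDER.sub(lambda m: str(_lookup(m, outputs)), value)
    if isinstance(value, list):
        return [resolve(v, outputs) for v in value]
    if isinstance(value, dict):
        return {k: resolve(v, outputs) for k, v in value.items()}
    return value


def run_pipeline(modules: list, apply: Callable[[dict, dict], dict]):
    """Apply modules in ascending weight order and halt at the first failure.

    Returns (names of applied modules, error message or None). Modules after
    the failing one are never attempted: the line stops, it does not retry.
    """
    outputs: dict = {}
    applied: list = []
    for module in sorted(modules, key=lambda m: m["weight"]):
        try:
            inputs = resolve(module.get("inputs", {}), outputs)
            outputs[module["name"]] = apply(module, inputs)
        except Exception as exc:  # any failure, including an unresolved input
            return applied, f"halted at {module['name']}: {exc}"
        applied.append(module["name"])
    return applied, None
```

A failure stops the loop outright. Nothing at a higher weight is attempted or re-applied, which is the property a reconciliation loop does not give you; an unresolved `#{module.output}` counts as a failure of the module that needed it.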

Both reconciliation and orchestration are necessary. Neither replaces the other. The mistake is treating one as a sufficient substitute for the other because it handles the demo well. (For a deeper look at how these patterns play out across ArgoCD, GitOps promotion, and Octopus Deploy in multi-account estates, see Deployment Orchestration for Multi-Environment EKS.)

Environments Are Runtime Parameters, Not Git Paths

In the GitOps model, environments are Git paths — overlays/dev/, overlays/prod/ — each requiring its own reconciliation instance, its own deploy key, its own CI token. Adding a new environment means new credentials, often a new ArgoCD installation, sometimes an entirely new Git repository.

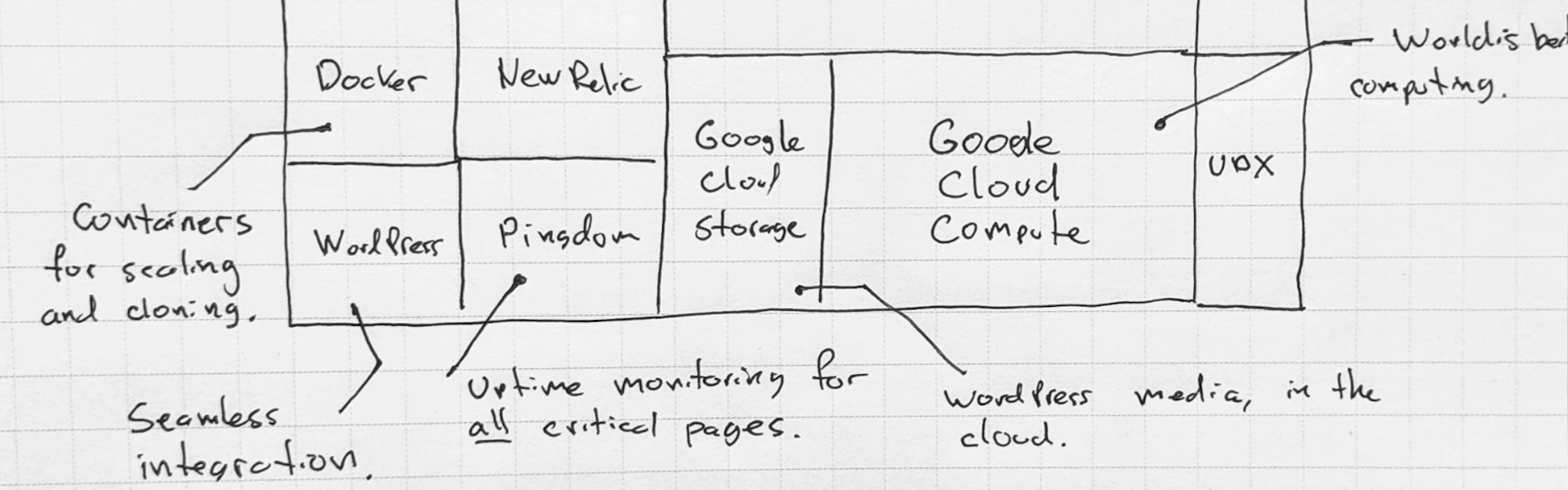

Teams that have managed 50, 100, or 300 environments across separate cloud accounts converge on a different model: environments as runtime parameters. The same module definitions apply everywhere. The difference between dev and prod is a set of variable substitutions — #{Environment}, #{Lifecycle}, #{Repository} — resolved at deploy time against the same module catalog.

This is not a cosmetic difference. It determines how operational complexity scales.

In the GitOps model, complexity is proportional to environments multiplied by workarounds per environment type. In the parameterized model, complexity is proportional to the number of modules in the catalog. Ten environments or a hundred — the catalog doesn't change. A site that needs three environments doesn't need three repos, three sets of deploy keys, or three ArgoCD installations. It needs three YAML files in a directory.

```yaml
# sites/client-portal/environments/production.yaml
environment: production
lifecycle: long-lived
variables:
  instance_type: m6i.xlarge
  replicas: 3
  db_instance_class: db.r6g.large
  cdn_price_class: PriceClass_All
  monitoring_alert_channel: "#ops-critical"
  domain: portal.client.com
```

```yaml
# sites/client-portal/environments/staging.yaml
environment: staging
lifecycle: long-lived
variables:
  instance_type: t3.medium
  replicas: 1
  db_instance_class: db.t4g.medium
  cdn_price_class: PriceClass_100
  monitoring_alert_channel: "#ops-staging"
  domain: staging-portal.client.com
```

```yaml
# sites/client-portal/environments/dev.yaml
environment: dev
lifecycle: ephemeral
variables:
  instance_type: t3.small
  replicas: 1
  db_instance_class: db.t4g.small
  cdn_price_class: PriceClass_100
  monitoring_alert_channel: "#dev"
  domain: dev-portal.client.com
```

Adding a fourth environment — say, a demo instance for sales — is adding a fourth YAML file. No new pipeline, no new ArgoCD instance, no new deploy keys. The module catalog stays the same. The variables change.
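To make "the variables change, the catalog doesn't" concrete, here is a minimal Python sketch that renders one shared catalog against each environment's variables. The catalog contents and function names are hypothetical, and the environment values are copied from the files above as plain dicts to avoid a YAML dependency. `#{name}` is treated as an environment variable; `#{module.output}` references are deliberately left untouched for the deploy-time engine.

```python
import re
from typing import Any

# #{name} with no dot: a per-environment variable, resolved at render time.
# #{module.output} references are left alone for the pipeline to resolve later.
VARIABLE = re.compile(r"#\{(\w+)\}")

# A tiny, hypothetical module catalog shared by every environment.
CATALOG = {
    "kubernetes": {"type": "aws-eks", "instance_type": "#{instance_type}"},
    "application": {"type": "k8s-deployment", "replicas": "#{replicas}"},
    "cdn": {
        "type": "aws-cloudfront-distribution",
        "price_class": "#{cdn_price_class}",
        "aliases": ["#{domain}"],
        "origin": "#{application.service_url}",
    },
}

# Values taken from the environment files above.
ENVIRONMENTS = {
    "production": {"instance_type": "m6i.xlarge", "replicas": 3,
                   "cdn_price_class": "PriceClass_All", "domain": "portal.client.com"},
    "staging": {"instance_type": "t3.medium", "replicas": 1,
                "cdn_price_class": "PriceClass_100", "domain": "staging-portal.client.com"},
    "dev": {"instance_type": "t3.small", "replicas": 1,
            "cdn_price_class": "PriceClass_100", "domain": "dev-portal.client.com"},
}


def render(value: Any, variables: dict) -> Any:
    """Substitute #{name} variables; a missing variable raises KeyError."""
    if isinstance(value, str):
        whole = VARIABLE.fullmatch(value)
        if whole:  # a bare variable keeps its type, so replicas stays an int
            return variables[whole.group(1)]
        return VARIABLE.sub(lambda m: str(variables[m.group(1)]), value)
    if isinstance(value, list):
        return [render(v, variables) for v in value]
    if isinstance(value, dict):
        return {k: render(v, variables) for k, v in value.items()}
    return value


def render_environment(name: str) -> dict:
    """Render the shared catalog for one environment without mutating it."""
    return render(CATALOG, ENVIRONMENTS[name])
```

A demo environment is one more entry in `ENVIRONMENTS` (one more YAML file in the real layout); `CATALOG` is never edited.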

The strongest implementations put environment configs in the application repo itself — not a separate infra-configs repo that developers have to cross-reference. A developer working on a site can see its production CloudFront config, its staging database config, and its dev monitoring config all in one place, versioned alongside the code those configs serve. The multi-repo coordination problem dissolves entirely.

The Self-Healing Blind Spot

Here is a scenario that happens more often than anyone admits: a pod is Running and Ready. The reconciliation controller reports healthy. And the service is silently failing — dropping requests, returning errors, connected to a stale database endpoint that was rotated two hours ago. The manifest matches Git perfectly. The system is broken.

ArgoCD's drift detection reports that the cluster matches Git. It does not report that the system is working. These are different questions, and conflating them creates a blind spot that no amount of self-healing can fix.

There is a deeper problem. In the common in-cluster installation, ArgoCD runs inside the cluster it is evaluating. If the cluster is degraded (nodes under memory pressure, a flapping network, an overloaded control plane), ArgoCD is running in that degraded state and reporting from inside it. This is not independent verification. It is the evaluated system attesting to its own correctness.

Safety-critical engineering has a formal term for this: Independent Verification and Validation (IV&V). Aviation software standards (DO-178C) and automotive safety standards (ISO 26262) both require verification with independence at their most critical levels: the check is carried out by someone, or something, other than the thing being checked. Safety-critical architectures carry the same idea into runtime, designing monitors so they do not share failure modes with the functions they watch. A flight computer doesn't verify itself. A brake controller doesn't sign off on its own output.

The strongest deployment systems maintain three independent sources of truth: what was declared (the YAML configs), what was applied (the Terraform state or Kubernetes API server), and what is actually running (external probes hitting real endpoints from outside the cluster). When all three agree, the system is healthy. When any diverge, you know which layer failed — not just that something is wrong, but whether it's a config problem, an apply problem, or a runtime problem.
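The third source only counts if it is measured from outside. As a minimal sketch, assuming a Python check that runs on a host outside the cluster, the HTTP expectations below (`status`, `body_contains`, `latency_ms_max`) mirror the HTTP probe in the verification file that follows. `evaluate` and `probe_http` are hypothetical names, and the DNS and TLS probes are omitted.

```python
import time
import urllib.error
import urllib.request
from dataclasses import dataclass


@dataclass
class ProbeResult:
    ok: bool
    reason: str


def evaluate(status: int, body: str, latency_ms: float, expect: dict) -> ProbeResult:
    """Compare one observed response against the probe's expectations."""
    if status != expect.get("status", 200):
        return ProbeResult(False, f"status {status}, expected {expect.get('status', 200)}")
    needle = expect.get("body_contains")
    if needle is not None and needle not in body:
        return ProbeResult(False, f"body does not contain {needle!r}")
    limit = expect.get("latency_ms_max")
    if limit is not None and latency_ms > limit:
        return ProbeResult(False, f"latency {latency_ms:.0f} ms exceeds {limit} ms")
    return ProbeResult(True, "ok")


def probe_http(url: str, expect: dict, timeout: float = 5.0) -> ProbeResult:
    """Run from outside the cluster, so a degraded cluster cannot vouch for itself."""
    start = time.monotonic()
    try:
        with urllib.request.urlopen(url, timeout=timeout) as resp:
            status = resp.status
            body = resp.read().decode("utf-8", errors="replace")
    except urllib.error.HTTPError as err:  # non-2xx: still a response worth evaluating
        status, body = err.code, ""
    except OSError as err:  # DNS failure, refused connection, TLS error, timeout
        return ProbeResult(False, f"unreachable: {err}")
    latency_ms = (time.monotonic() - start) * 1000
    return evaluate(status, body, latency_ms, expect)
```

A call such as `probe_http("https://portal.client.com/healthz", {"status": 200, "body_contains": "ok", "latency_ms_max": 500})` returns a pass/fail with a reason. Keeping `evaluate` separate from the network call lets the expectations themselves be unit tested.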

```yaml
# health/verification.yaml: three-source-of-truth health check
verification:
  declared:
    source: git
    ref: main
    path: sites/client-portal/environments/production.yaml
    check: sha256 of config matches last deployed snapshot

  applied:
    source: terraform-state
    backend: s3://deployments/client-portal/production/terraform.tfstate
    check: resource attributes match declared config values
    resources:
      - aws_cloudfront_distribution.main
      - aws_route53_record.primary
      - aws_ecs_service.app

  running:
    source: external-probes
    check: synthetic requests from outside the cluster
    probes:
      - type: http
        url: https://portal.client.com/healthz
        expect: { status: 200, body_contains: "ok", latency_ms_max: 500 }
      - type: dns
        record: portal.client.com
        expect: { cname: d1234.cloudfront.net }
      - type: tls
        host: portal.client.com
        expect: { issuer: "Amazon", days_until_expiry_min: 30 }
```

When declared and applied agree but running fails, you have a runtime problem — the infra is correct but the application is broken. When declared and running agree but applied diverges, someone changed infrastructure outside the pipeline. Each combination points to a different root cause.
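The mapping from disagreement pattern to failing layer is small enough to write down. A minimal sketch, assuming each source has already been reduced to the same set of attributes (for example an image tag read from the config, from state, and from a version endpoint). The runtime and out-of-band cases are the ones described above; the "not applied" and "multiple" cases are an extrapolation of the same logic, and all names are hypothetical.

```python
from enum import Enum


class Diagnosis(Enum):
    HEALTHY = "all three sources agree"
    RUNTIME = "runtime problem: infrastructure matches config, live system does not"
    OUT_OF_BAND = "applied state diverged: infrastructure changed outside the pipeline"
    NOT_APPLIED = "apply problem: the declared change never reached the infrastructure"
    MULTIPLE = "more than one layer diverged"


def diagnose(declared: dict, applied: dict, running: dict) -> Diagnosis:
    """Each argument maps the same attribute names to the value seen by that source."""
    declared_is_applied = declared == applied
    declared_is_running = declared == running
    if declared_is_applied and declared_is_running:
        return Diagnosis.HEALTHY
    if declared_is_applied:
        return Diagnosis.RUNTIME
    if declared_is_running:
        return Diagnosis.OUT_OF_BAND
    if applied == running:  # infra and runtime agree with each other, but not with Git
        return Diagnosis.NOT_APPLIED
    return Diagnosis.MULTIPLE


def diverging(a: dict, b: dict) -> set:
    """Attribute names whose values differ between two sources."""
    return {k for k in a.keys() | b.keys() if a.get(k) != b.get(k)}
```

Pairing the verdict with `diverging` narrows the answer from "which layer" to "which attribute in that layer".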

The Air-Gapped Cluster Is Not an Edge Case

The GitOps pull model requires the cluster to reach Git over HTTPS or SSH. When the cluster cannot reach Git (by design, not by misconfiguration), the model stops. Syncs fail, often without anyone noticing. Drift goes undetected. The system diverges from its declared state with no automated correction.

The instinct is to treat this as a network problem to solve: add a GitHub Enterprise Server (GHES) instance, peer the VPCs, set up a Git mirror. These are valid solutions when restricted access is the constraint. They are the wrong answer when the premise is "this cluster genuinely cannot and will not reach any Git endpoint."

Air-gapped environments are not edge cases in government, defense, and regulated industries. They are the baseline. A cluster in a classified enclave, a client-managed environment with strict egress controls, or a GovCloud deployment with no outbound internet is a hard constraint to design around, not a network problem to fix.

There are three models for reaching these environments:

Pull-based (ArgoCD) — the cluster reaches out to Git. Fails when Git is unreachable.

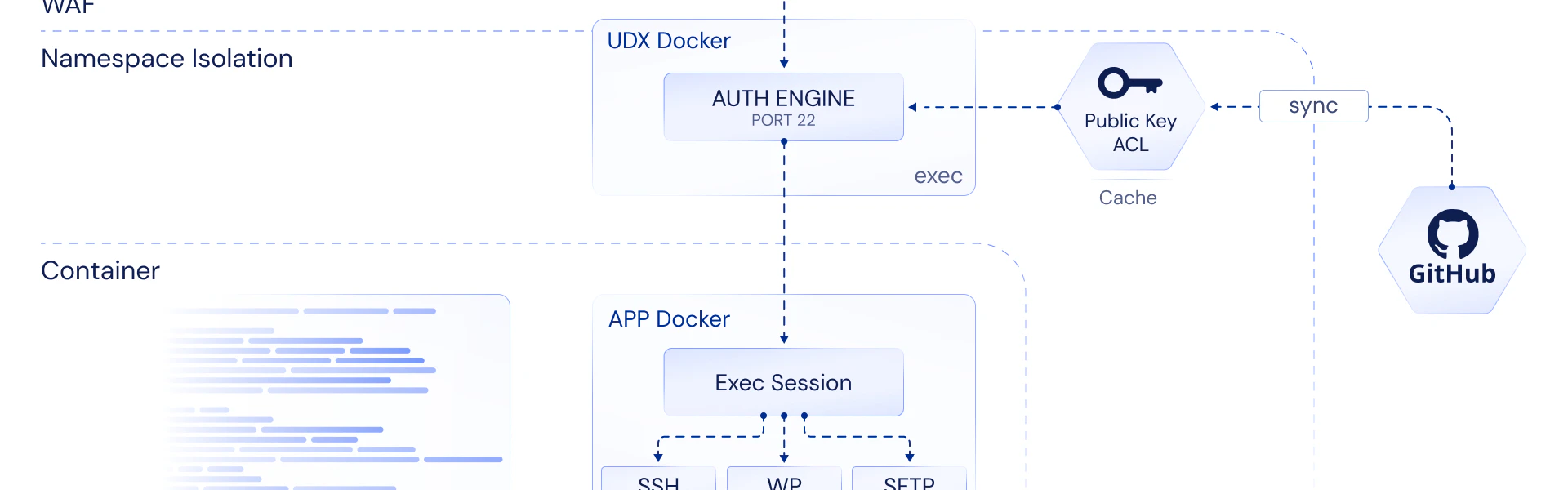

Push-based with agents — a lightweight agent inside the cluster initiates an outbound connection to an orchestration server. Works when the cluster can reach one known HTTPS endpoint, even if it can't reach Git.

Workflow-triggered — a CI/CD workflow runs entirely outside the cluster, authenticates via OIDC federation and role assumption, and applies changes remotely through cloud APIs. The cluster doesn't initiate anything. It doesn't know Git exists. The workflow is the actor; the cluster is the substrate.

The third model is how multi-account infrastructure actually gets deployed in practice. A GitHub Actions workflow assumes an IAM role via OIDC, chains into deployment roles in target accounts, and applies Terraform modules against those accounts' resources. (The mechanics of how OIDC federation, IRSA, Pod Identity, and cross-account STS role chains actually wire together inside EKS are detailed in Octopus Deploy on AWS.) The useful question is not "how do we get Git access into this cluster?" It is "why does this cluster need to reach Git at all?"

```yaml
# .github/workflows/deploy-infrastructure.yaml
name: Deploy Infrastructure

on:
  push:
    branches: [main]
    paths: ["sites/*/environments/**"]

permissions:
  id-token: write   # OIDC token for AWS STS
  contents: read    # needed by actions/checkout once permissions are set explicitly

jobs:
  deploy:
    runs-on: ubuntu-latest
    strategy:
      matrix:
        account:
          - { name: dev, role: "arn:aws:iam::111111111111:role/deploy-infra", region: us-east-1 }
          - { name: production, role: "arn:aws:iam::222222222222:role/deploy-infra", region: us-east-1 }
          # GovCloud ARNs use the aws-us-gov partition
          - { name: govcloud, role: "arn:aws-us-gov:iam::333333333333:role/deploy-infra", region: us-gov-west-1 }
    steps:
      - uses: actions/checkout@v4

      - name: Assume deployment role via OIDC
        uses: aws-actions/configure-aws-credentials@v4
        with:
          role-to-assume: ${{ matrix.account.role }}
          aws-region: ${{ matrix.account.region }}
          role-duration-seconds: 900

      - name: Apply infrastructure modules
        run: |
          ./deploy.sh \
            --environment ${{ matrix.account.name }} \
            --config sites/client-portal/environments/${{ matrix.account.name }}.yaml \
            --halt-on-failure
```

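The cluster is the target. GitHub Actions authenticates via OIDC federation: no stored credentials, no deploy keys, no agent inside the cluster. The GovCloud account deploys through the same workflow with a different role ARN. The air-gapped cluster never initiates a connection to anything. The model does assume the runner can reach the target account's cloud APIs, and any private Kubernetes API endpoint that the Kubernetes-layer modules talk to.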
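For the workflow to assume a role without stored credentials, each target role must trust GitHub's OIDC provider. A minimal sketch of generating that trust policy in Python: the function name and the example repository are hypothetical, while the provider host `token.actions.githubusercontent.com`, the `sts.amazonaws.com` audience, and the `repo:<owner>/<repo>:ref:refs/heads/<branch>` subject format follow GitHub's OIDC conventions for AWS. The partition argument matters because GovCloud ARNs use `aws-us-gov` rather than `aws`.

```python
import json

GITHUB_OIDC_HOST = "token.actions.githubusercontent.com"


def github_oidc_trust_policy(account_id: str, repo: str, branch: str = "main",
                             partition: str = "aws") -> dict:
    """Trust policy letting only workflows on `branch` of `repo` assume the role.

    partition is "aws" for commercial accounts and "aws-us-gov" for GovCloud.
    """
    provider = f"arn:{partition}:iam::{account_id}:oidc-provider/{GITHUB_OIDC_HOST}"
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Effect": "Allow",
                "Principal": {"Federated": provider},
                "Action": "sts:AssumeRoleWithWebIdentity",
                "Condition": {
                    "StringEquals": {
                        f"{GITHUB_OIDC_HOST}:aud": "sts.amazonaws.com",
                        # Push to a branch: repo:<owner>/<repo>:ref:refs/heads/<branch>
                        f"{GITHUB_OIDC_HOST}:sub": f"repo:{repo}:ref:refs/heads/{branch}",
                    }
                },
            }
        ],
    }


if __name__ == "__main__":
    # "example-org/client-portal" is a hypothetical repository name.
    policy = github_oidc_trust_policy("333333333333", "example-org/client-portal",
                                      partition="aws-us-gov")
    print(json.dumps(policy, indent=2))
```

Pinning `sub` to a branch is what keeps a workflow running on a feature branch from assuming the deployment role. Jobs that declare a GitHub environment present a different subject (`repo:<owner>/<repo>:environment:<name>`), so the condition has to match whichever form the job actually sends.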

Compliance Boundaries Reshape Architecture

A Git deploy key shared by clusters in two AWS accounts with different compliance classifications is a lateral movement vector. If the key is compromised (through a supply chain attack, a leaked CI secret, a compromised runner), an attacker can read the manifests for every environment that key has access to.

The instinct is to say "it's just read access." In a CMMC Level 2 environment, where the boundary between CUI-handling systems and non-CUI systems must be demonstrably enforced, "just read" is not a sufficient control for an assessor. The threat model is not "can the attacker modify?" It is "can the attacker learn the topology, the endpoints, the config patterns?" Read access to production manifests is reconnaissance.

The GitOps solution — separate repos, separate keys, separate tokens per environment — works but multiplies operational surface. Each new compliance boundary adds another repo, another credential set, another CI configuration.

There is a structural alternative that is both simpler and stronger: keep environment configs in the application repo, and enforce boundaries through credential scope. The workflow reads configs from directories within the repo it already has access to — infra_configs/production/, infra_configs/development/ — and applies them through short-lived, scoped STS credentials per step, per account. No shared deploy keys across account boundaries. No cross-repo access tokens. The compliance boundary is enforced by the credential scope, not by repo-level access controls.

```yaml
# sites/client-portal/credentials.yaml: scoped credentials per environment
credentials:
  production:
    aws_account: "222222222222"
    role: "arn:aws:iam::222222222222:role/site-deploy-production"
    session_duration: 900
    boundary_policy: "arn:aws:iam::222222222222:policy/production-boundary"
    allowed_services: [cloudfront, route53, ecs, rds, secretsmanager]

  staging:
    aws_account: "111111111111"
    role: "arn:aws:iam::111111111111:role/site-deploy-staging"
    session_duration: 3600
    allowed_services: [cloudfront, route53, ecs, rds, secretsmanager, s3]

  govcloud:
    aws_account: "333333333333"
    # GovCloud ARNs use the aws-us-gov partition
    role: "arn:aws-us-gov:iam::333333333333:role/site-deploy-govcloud"
    session_duration: 900
    boundary_policy: "arn:aws-us-gov:iam::333333333333:policy/cui-boundary"
    allowed_services: [cloudfront, route53, ecs, rds]
    require_mfa: true
```

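Each environment gets its own role with its own permission boundary. The production role cannot touch staging resources. The GovCloud role has an additional MFA requirement and a tighter service scope. Because sessions obtained through OIDC web identity carry no MFA context, that requirement has to be met by a human step, such as an approval that gates the GovCloud run, rather than by the workflow's own role assumption. No shared credentials cross any account boundary: the structure enforces what policy documents promise.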
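A structure that enforces what policy documents promise is easiest to keep honest with a check in CI. A minimal sketch, assuming the credentials file has been loaded into a dict (it is inlined and trimmed here to avoid a YAML dependency); `lint_credentials` and `parse_arn` are hypothetical names. It flags roles that live outside their environment's account, permission boundaries from a different account or partition, session durations outside STS's 900 to 43,200 second range, and any role shared across environments.

```python
from itertools import combinations

# Modeled on the credentials file above; GovCloud ARNs use the aws-us-gov partition.
CREDENTIALS = {
    "production": {
        "aws_account": "222222222222",
        "role": "arn:aws:iam::222222222222:role/site-deploy-production",
        "session_duration": 900,
        "boundary_policy": "arn:aws:iam::222222222222:policy/production-boundary",
    },
    "staging": {
        "aws_account": "111111111111",
        "role": "arn:aws:iam::111111111111:role/site-deploy-staging",
        "session_duration": 3600,
    },
    "govcloud": {
        "aws_account": "333333333333",
        "role": "arn:aws-us-gov:iam::333333333333:role/site-deploy-govcloud",
        "session_duration": 900,
        "boundary_policy": "arn:aws-us-gov:iam::333333333333:policy/cui-boundary",
    },
}


def parse_arn(arn: str) -> dict:
    """Split arn:partition:service:region:account:resource into its fields."""
    parts = arn.split(":", 5)
    if len(parts) != 6 or parts[0] != "arn":
        raise ValueError(f"not an ARN: {arn!r}")
    return {"partition": parts[1], "service": parts[2], "account": parts[4], "resource": parts[5]}


def lint_credentials(credentials: dict) -> list:
    """Return human-readable violations; an empty list means the scopes are isolated."""
    problems = []
    for env, cred in credentials.items():
        role = parse_arn(cred["role"])
        if role["account"] != cred["aws_account"]:
            problems.append(f"{env}: role is in account {role['account']}, not {cred['aws_account']}")
        if "boundary_policy" in cred:
            boundary = parse_arn(cred["boundary_policy"])
            if (boundary["partition"], boundary["account"]) != (role["partition"], role["account"]):
                problems.append(f"{env}: boundary policy is outside the role's partition/account")
        # STS accepts 900..43200 seconds (the role's MaxSessionDuration may cap it lower).
        if not 900 <= cred["session_duration"] <= 43200:
            problems.append(f"{env}: session_duration {cred['session_duration']} outside 900-43200")
    for (env_a, a), (env_b, b) in combinations(credentials.items(), 2):
        if a["role"] == b["role"]:
            problems.append(f"{env_a} and {env_b} share role {a['role']}")
    return problems
```

Run as a required check, a non-empty result blocks the merge, so an accidental copy-paste of a production role into another environment never reaches a deployment.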

Compliance constraints are not implementation details to be optimized away. They are requirements that reshape architecture. The team that designs for them from the start ends up with something cleaner than the team that bolts them on later.

The Unit of Deployment Is a Site, Not a Manifest

This is the decision that determines everything downstream, and it is rarely discussed because the answer is assumed: the unit of deployment is a Kubernetes manifest set. Everything else — DNS, CDN, SSL, monitoring, database instances — belongs to a separate pipeline.

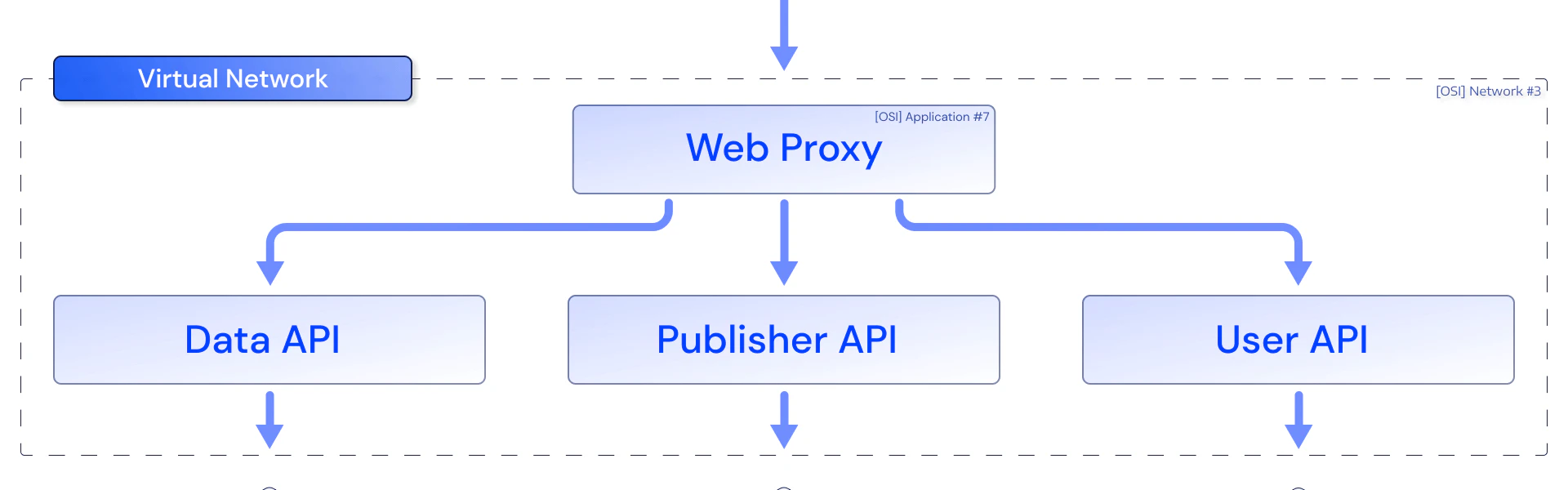

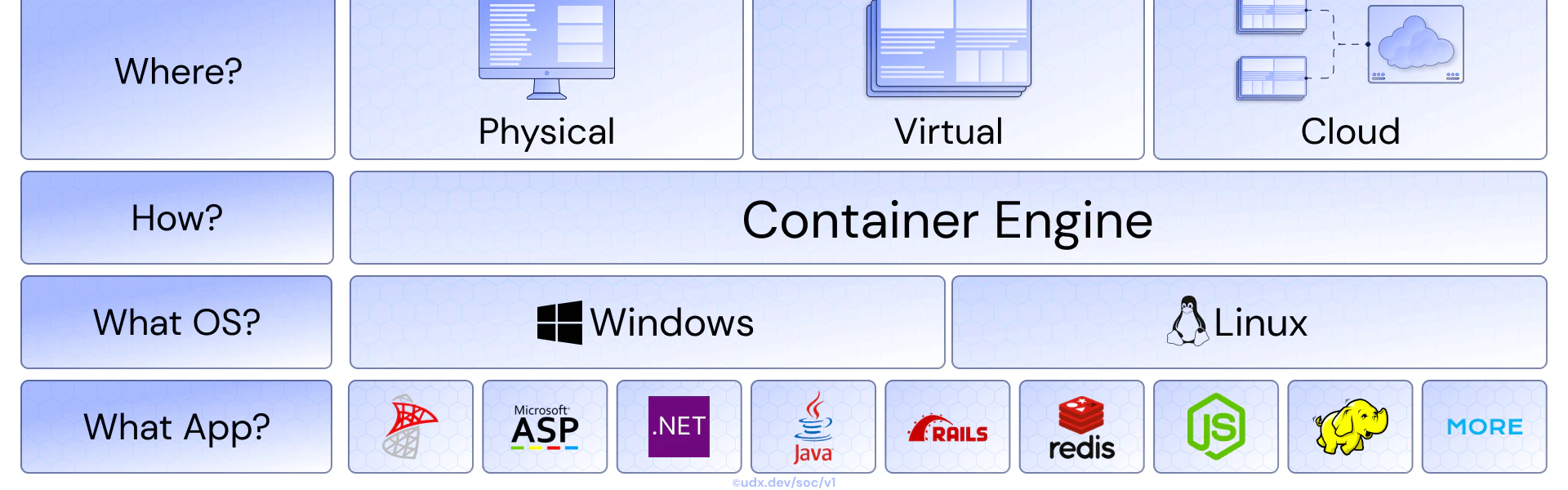

In practice, the unit of deployment for most organizations is a site: a complete, addressable service that includes application containers, networking, DNS, CDN, SSL, monitoring, and often a database. A site has environments. Each environment has its own CloudFront distribution, its own Route53 records, its own ACM certificate, its own monitoring config. The manifest set is one layer of the site, not the whole thing.

When the unit is a manifest set, adding CDN management means building a separate Terraform pipeline — separate state backend, separate credentials, separate promotion model. Two pipelines. Two sets of failure modes. Two places to look when something breaks at 2am.

When the unit is a site, everything is one pipeline. The module catalog includes k8s-deployment alongside aws-cloudfront-distribution and aws-route53 and gcp-monitoring. The site's config declares all the modules it needs. The workflow engine applies them in dependency order. Adding monitoring to a site is adding a YAML file, not building a new pipeline.

```yaml
# sites/client-portal/site.yaml: the site is the unit of deployment
site:
  name: client-portal
  slug: client-portal
  owner: platform-team

modules:
  - type: aws-vpc
    config: modules/networking.yaml

  - type: aws-rds
    config: modules/database.yaml

  - type: aws-eks
    config: modules/kubernetes.yaml

  - type: k8s-deployment
    config: modules/application.yaml
    image: "#{Registry}/client-portal:#{GitSha}"

  # The certificate must exist, and cover every alias, before CloudFront can serve them
  - type: aws-acm
    config: modules/ssl.yaml
    domain: "#{domain}"
    subject_alternative_names: ["www.#{domain}"]
    validation: dns

  - type: aws-cloudfront-distribution
    config: modules/cdn.yaml
    origin: "#{k8s-deployment.service_url}"
    aliases: ["#{domain}", "www.#{domain}"]
    certificate_arn: "#{aws-acm.certificate_arn}"  # CloudFront requires an ACM cert in us-east-1

  - type: aws-route53
    config: modules/dns.yaml
    records:
      - name: "#{domain}"
        type: A
        alias: "#{aws-cloudfront-distribution.domain_name}"
      - name: "www.#{domain}"
        type: A
        alias: "#{aws-cloudfront-distribution.domain_name}"

  - type: gcp-monitoring
    config: modules/monitoring.yaml
    endpoints:
      - "https://#{domain}/healthz"
      - "https://#{domain}/api/status"

environments:
  - path: environments/production.yaml
  - path: environments/staging.yaml
  - path: environments/dev.yaml
```

One file declares the entire site — application, infrastructure, CDN, DNS, SSL, monitoring. One pipeline applies it. When something breaks at 2am, there is one place to look. Adding a new capability is adding a module reference, not building a new pipeline.
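The workflow engine applies a site's modules in dependency order. One way to derive that order, assuming dependencies are exactly the `#{module-type.output}` references in each module's config, is a topological sort. This is a hypothetical Python sketch using the standard library's `graphlib`; the module list is simplified, and its explicit upstream references (including the CloudFront module consuming an ACM certificate ARN) are assumptions for illustration.

```python
import re
from graphlib import TopologicalSorter

# #{module-type.output}: a reference to another module's output. Variables such as
# #{domain} or #{GitSha} have no dot and do not create dependencies.
REFERENCE = re.compile(r"#\{([\w-]+)\.\w+\}")

# Hypothetical, simplified module list in the spirit of site.yaml, with the
# upstream references written in explicitly.
SITE_MODULES = [
    {"type": "aws-vpc"},
    {"type": "aws-rds", "vpc_id": "#{aws-vpc.vpc_id}"},
    {"type": "aws-eks", "subnet_ids": "#{aws-vpc.subnet_ids}"},
    {"type": "k8s-deployment", "cluster": "#{aws-eks.cluster_endpoint}",
     "db": "#{aws-rds.db_endpoint}", "image": "#{Registry}/client-portal:#{GitSha}"},
    {"type": "aws-acm", "domain": "#{domain}"},
    {"type": "aws-cloudfront-distribution", "origin": "#{k8s-deployment.service_url}",
     "certificate_arn": "#{aws-acm.certificate_arn}"},
    {"type": "aws-route53", "alias": "#{aws-cloudfront-distribution.domain_name}"},
    {"type": "gcp-monitoring", "endpoints": ["https://#{domain}/healthz"]},
]


def references(value) -> set:
    """Module types referenced anywhere inside a (nested) config value."""
    if isinstance(value, str):
        return set(REFERENCE.findall(value))
    if isinstance(value, list):
        return set().union(*(references(v) for v in value))
    if isinstance(value, dict):
        return set().union(*(references(v) for v in value.values()))
    return set()


def apply_order(modules: list) -> list:
    """Topologically sort modules so every module follows the ones it references."""
    known = {m["type"] for m in modules}
    graph = {}
    for module in modules:
        deps = references(module)
        if deps - known:
            raise ValueError(f"{module['type']} references unknown modules {sorted(deps - known)}")
        graph[module["type"]] = deps
    return list(TopologicalSorter(graph).static_order())  # raises CycleError on cycles
```

Inferred order only sees explicit references. Here `gcp-monitoring` depends on nothing and may be scheduled first, which is the kind of gap that explicit module weights close.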

This is the architectural decision that separates systems that scale gracefully from systems that accumulate operational surface with every new capability. It determines how many pipelines you operate, how many state backends you manage, how many credential scopes you maintain, and how much context a new team member needs before they can safely deploy. Even legacy protocols like SFTP, still a hard requirement in many enterprise environments, fit cleanly into the site model when the gateway is built for Kubernetes rather than bolted on as a separate system.

The Optimization Scope Problem

The common thread in all of this is optimization scope.

Tooling evangelism optimizes for the showcase scenario: a single cloud provider, internet-accessible clusters, a unified account structure, primarily Kubernetes workloads, a team with strong Git discipline. Within that scenario, the GitOps model is genuinely elegant. The demo works. The blog post writes itself.

Operators optimize for the full estate. Separate accounts. Air-gapped clusters. Cross-cloud deployments. Non-Kubernetes workloads. Compliance boundaries that cannot be satisfied with shared credentials. CDN and DNS that deploy alongside the application, not in a separate pipeline. Client-managed environments where you control the application but not the network.

The team that built the platform serving hundreds of higher-ed institutions didn't start by choosing a tool. They started by enumerating the constraints: multi-account isolation, regulated data environments, clients who couldn't modify their network, infrastructure that spanned Kubernetes and cloud-native services in the same deployment. The design choices (ordered deployment graphs, parameterized environments, site-level deployment units) followed from the constraints. The constraints were never optional.

The showcase scenario is a useful starting point. It is not a reliable ending point for anyone running production systems at scale under real constraints. And when the deployment pipeline is finally right, the next bottleneck is almost always iteration speed — the gap between a developer's thought and its validation in a real environment.

Evaluating Deployment Architecture

The standard evaluation asks whether a system handles the common case. The harder evaluation asks what the system costs — not in dollars, but in cognitive load, blast radius, and recovery time.

Blast radius is an architecture choice, not an incident metric. A deployment system where a bad config change propagates to every environment simultaneously has a fundamentally different risk profile than one where promotion is explicit and environments are structurally isolated. The question is not "how fast can we roll back?" It is "how many environments were affected before anyone knew?" Progressive delivery — canary releases, traffic shifting, automated rollback on error budgets — reduces blast radius for application changes. But infrastructure changes (a DNS record, a CloudFront behavior, an IAM policy) rarely have canary equivalents. If the deployment architecture treats infrastructure changes with the same blast radius as application changes, that is a design gap, not an acceptable tradeoff.

Time-to-first-deploy reveals what the documentation hides. The 2025 DORA report found that platform engineering capabilities most correlated with positive outcomes were those that gave clear feedback on deployment results and reduced the steps a developer needed to go from code to running service. The strongest signal is not deployment frequency, a metric that rewards small, frequent changes regardless of whether the system makes them easy or just tolerable. The strongest signal is how long it takes a new engineer, with no prior context, to deploy a change to a real environment. If the answer involves reading a wiki, requesting credentials from three teams, and understanding which of four pipelines applies to their service, the architecture has failed at the layer that matters most: approachability.

Recovery time is shaped before the incident starts. DORA's Failed Deployment Recovery Time metric measures the clock between "something broke" and "the fix is deployed." But the actual recovery experience is determined by architectural decisions made months earlier. Can the operator see what changed? Is there a single deployment record with the config snapshot, the approver, the timestamp, and the previous state — or does recovery require reconstructing the sequence from Git history, Terraform state, CloudWatch logs, and someone's memory? Systems that maintain structured deployment records with diffable config snapshots recover faster not because their operators are better, but because the architecture gives them something to work with.
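A single deployment record with the config snapshot, the approver, the timestamp, and the previous state can be as plain as a chain of immutable records. A minimal Python sketch; `DeploymentRecord`, `next_record`, and `what_changed` are hypothetical names, and persistence is left out. The point is that "what changed?" becomes one function call instead of a reconstruction across Git, state files, and logs.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import Optional


@dataclass(frozen=True)
class DeploymentRecord:
    """One deployment: who approved what config, when, and what it replaced."""
    site: str
    environment: str
    config: dict          # the full rendered config snapshot, not a pointer to Git
    approver: str
    timestamp: datetime
    previous: Optional["DeploymentRecord"] = None


def next_record(site: str, environment: str, config: dict, approver: str,
                previous: Optional[DeploymentRecord] = None) -> DeploymentRecord:
    """Create a record stamped with the current UTC time, chained to its predecessor."""
    return DeploymentRecord(site, environment, config, approver,
                            datetime.now(timezone.utc), previous)


def config_diff(old: dict, new: dict, prefix: str = "") -> list:
    """Line-per-change diff of two nested config snapshots."""
    changes = []
    for key in sorted(old.keys() | new.keys()):
        path = f"{prefix}{key}"
        if key not in old:
            changes.append(f"+ {path} = {new[key]!r}")
        elif key not in new:
            changes.append(f"- {path} = {old[key]!r}")
        elif isinstance(old[key], dict) and isinstance(new[key], dict):
            changes.extend(config_diff(old[key], new[key], prefix=f"{path}."))
        elif old[key] != new[key]:
            changes.append(f"~ {path}: {old[key]!r} -> {new[key]!r}")
    return changes


def what_changed(record: DeploymentRecord) -> list:
    """What this deployment changed relative to the one before it."""
    before = record.previous.config if record.previous else {}
    return config_diff(before, record.config)
```

Rolling back is then redeploying `record.previous.config`, with the diff already in hand to confirm it undoes exactly what broke.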

The cognitive load test is the one most teams skip. Team Topologies brought cognitive load theory's three-way distinction to software teams: intrinsic cognitive load (the complexity of the domain), extraneous cognitive load (the complexity of the tooling), and germane cognitive load (the learning that actually improves capability). A deployment architecture that requires developers to understand overlay directory structures, Kustomize patch semantics, ArgoCD sync waves, and the interaction between Helm values and environment-specific overrides is extraneous load: complexity that serves the tool, not the domain. The question is whether the architecture absorbs that complexity into the platform or distributes it to every team that deploys.

Measure what the system prevents, not just what it enables. Every deployment architecture enables deployments. The differentiator is what it prevents. Does it prevent a release from reaching production without passing through earlier environments — structurally, not by policy? Does it prevent credential reuse across compliance boundaries? Does it prevent a single pipeline failure from blocking unrelated services? The things a system makes impossible are more revealing than the things it makes possible, because prevention is structural and enablement is aspirational.
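Preventing a release from skipping environments structurally, not by policy, means the check sits in the code path that performs the deployment. A minimal sketch, assuming an ordered lifecycle of environments and an in-memory record of where each release has succeeded; `Lifecycle`, `deploy`, and `PromotionBlocked` are hypothetical names.

```python
from dataclasses import dataclass, field
from typing import Callable


class PromotionBlocked(Exception):
    """A release tried to skip an earlier environment in its lifecycle."""


@dataclass
class Lifecycle:
    phases: list                                   # e.g. ["dev", "staging", "production"]
    succeeded: dict = field(default_factory=dict)  # release -> environments it passed

    def missing_before(self, release: str, environment: str) -> list:
        """Earlier phases this release has not yet passed (ValueError for unknown envs)."""
        earlier = self.phases[: self.phases.index(environment)]
        done = self.succeeded.get(release, set())
        return [phase for phase in earlier if phase not in done]


def deploy(lifecycle: Lifecycle, release: str, environment: str,
           apply: Callable[[str, str], None]) -> None:
    """Single deployment entry point: the lifecycle check runs before every apply."""
    missing = lifecycle.missing_before(release, environment)
    if missing:
        raise PromotionBlocked(f"{release} has not passed {', '.join(missing)}")
    apply(release, environment)  # if this raises, success is never recorded
    lifecycle.succeeded.setdefault(release, set()).add(environment)
```

The design choice is that success is recorded only after `apply` returns, so a failed staging deployment leaves production just as unreachable as a skipped one.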

The operator who evaluates on these dimensions is not optimizing for elegance. They are optimizing for the moment when something goes wrong at 2am and the architecture either helps them recover or becomes the thing they have to recover from.